pganalyze MCP Server is now in Public Preview

Like many of you, we see benefits to use AI tools in our database workflows, but at the same time worry about the risks. Pasting query output into a chat box risks leaking customer data, and pointing an agent at a live production connection can lead to worse. The pganalyze MCP Server takes a different approach: it gives the model curated access to the production database statistics pganalyze already collects, with no direct database access required.

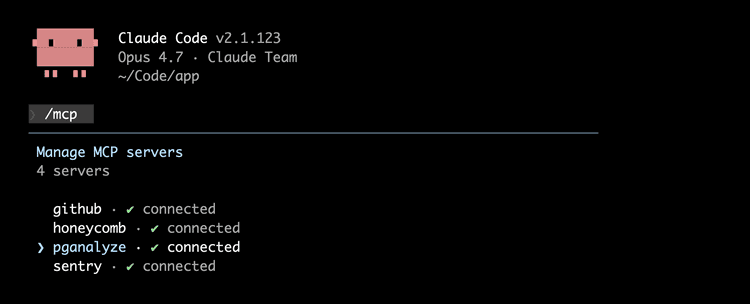

Today the pganalyze MCP Server moves from early access to public preview! Almost 100 companies took part in early access, with a select group of design partners providing in-depth feedback. It works with Claude Code, Codex, Cursor, and other MCP-compatible clients.

Before we walk through what is now available, it is worth asking the question that shaped this work in the first place: when an experienced engineer debugs a slow Postgres query in production, what information are they actually pulling together?

What an LLM needs to debug Postgres

One of the most common AI tool use cases we've heard as we've talked to customers, is accelerating incident response, and better triaging of slow queries in production. Many platform teams have long wanted engineers to self-serve production database issues, but interpreting query stats and EXPLAIN plans takes years of practice.

When an experienced engineer investigates a regression on a production Postgres database, running ad-hoc SELECT against the live data is usually a last resort. Most teams reach for continuously collected observability data instead. That distinction matters, because it is also the distinction between giving the model curated data vs giving the model something free form that can be dangerous, like a direct database connection.

Typical Postgres data sources for incident response

- Aggregated query statistics from

pg_stat_statements, to see which queries dominate runtime over a given window. - EXPLAIN plans (ideally historic information from

auto_explain) for the suspect query, to understand the planner's chosen access path. - Table and index stats from

pg_stat_user_tablesandpg_stat_user_indexes: dead tuples, scan counts, unused indexes. - Autovacuum activity from

pg_stat_progress_vacuumand the Postgres log. - Connection state and wait events from

pg_stat_activity. - Replication lag and replica health, whenever replicas are involved.

Tracking this data in the right way, and combining it with a capable AI tool can enable more engineers to act without escalating to the database team.

None of this requires reading sensitive customer data from tables. Much of the critical information lives in Postgres's statistics views, in metadata, and if you're using pganalyze, in monitoring data we already collect. An LLM with access to this statistical data can be genuinely useful for performance work, while staying far away from anything that could leak customer data or cause an incident of its own.

Why generic database MCPs fall short

There are a number of generic Postgres MCP servers readily available. Most of them work by taking a connection string, opening a session against the live database, and exposing tools that can run arbitrary SQL against your tables. That model is convenient for a local prototype. It is dangerous for production work.

In practice, three things go wrong: First, the model has no way to distinguish a small exploratory SELECT from a SELECT * over a sensitive table, so query parameters and result rows can leak personally identifiable information into the model's context. Second, statistics views like pg_stat_statements only expose cumulative activity, so the historical comparisons that matter for incident reviews are not really possible. Third, because the database itself becomes the work surface, even a well-instructed agent can put load on production at exactly the wrong moment.

The other part that's challenging is that there is not one Postgres MCP Server - there are many, and each presents its own challenges:

| Server | "Run a query" tool | Safety measure | Protects against writes? | Maintained? |

|---|---|---|---|---|

| pgEdge Postgres MCP | query_database | READ ONLY TRANSACTION | Yes | Yes |

| DBHub | execute_sql | Query keyword check | No (wCTEs, functions) | Yes |

| Anthropic's original Postgres MCP | query | READ ONLY TRANSACTION | Yes | No |

| CrystalDBA Postgres MCP | execute_sql | READ ONLY TRANSACTION + multi-statement check | Yes | Unclear |

AWS Labs pg-mcp-server | run_query | Query keyword check | No (EXECUTE in a DO block, functions) | Yes |

A purpose-built abstraction avoids all three failure modes by design, and does not depend on the model behaving itself when handed a connection string. That is the path we have taken with the pganalyze MCP Server.

This is not a hypothetical concern. When Robinhood publicly documented their MCP architecture, they explained why they built it on pganalyze rather than handing models a live database connection. As their engineering team put it: "Since there is no user-data being exported, pganalyze helps us remove any risk of data leaks."

How the pganalyze MCP Server works

What does that purpose-built abstraction look like in practice? The pganalyze MCP Server is a hosted endpoint that exposes a curated set of tools on top of the pganalyze GraphQL API. There is no direct connection to your database involved:

Instead, the model queries pganalyze, and pganalyze answers from the statistical data it already collects and stores in its internal workload repository. Because that data is continuous time-series, investigations can span days or weeks of history rather than only what is visible in the live moment.

Connecting from Claude Code looks like this:

claude mcp add --transport http pganalyze https://app.pganalyze.com/mcpOnce connected, we can simply run investigations and our AI tool will pick pganalyze tools automatically. Let's look at a few common workflows make this concrete.

To investigate a slow query, an AI agent can pull the top queries by runtime through get_query_stats, then drill into EXPLAIN plans for a regression using get_query_explains and get_query_explain. Here is a practical example with Claude Code:

But query statistics and EXPLAIN plans isn't all. To audit a database's health, it can read the current check-up status through get_checkup_status and surface unresolved alerts like insufficient VACUUM frequency or unused indexes. To find missing indexes on a hot table, it can run run_index_selection and get back the same Index Advisor recommendations you would see in the pganalyze app. The full tool reference lives in the docs.

Linking APM traces to EXPLAIN plans

If your application is instrumented with OpenTelemetry tracing, there is one more workflow worth calling out: get_query_explain_from_trace resolves a slow trace span directly to its EXPLAIN plan, bridging the gap between the APM tool where the slow request was first observed and pganalyze where the responsible query lives.

Here is an example of how this interaction can look like when combining the pganalyze MCP Server and the Honeycomb MCP Server:

I've heard reports of slow requests for log statistics in pganalyze recently. can you find a trace for that?

⏺ Let me investigate this using Honeycomb. I'll start by getting the workspace context and looking for relevant data.

...

⏺ Found plenty of slow traces. Let me get the trace details for the slowest one to see what's causing the latency. Here's what I found:

The LogStats GraphQL operation is indeed slow. Over the last 7 days:

┌────────────────┬──────────┐

│ Metric │ Value │

├────────────────┼──────────┤

│ Total requests │ 980 │

├────────────────┼──────────┤

│ P95 latency │ 4,343ms │

├────────────────┼──────────┤

│ Max latency │ 25,202ms │

└────────────────┴──────────┘

Example slow trace

...Can we look at the explain plan for a specific slow request?

⏺ I can see the trace has EXPLAIN Plan spans from the Postgres dataset. Let me use the pganalyze MCP tool to get the explain plan from this trace. I need the db.postgresql.plan attribute from the EXPLAIN Plan spans. Let me check the Postgres dataset for those span details.

⏺ honeycomb - run_query (MCP)(environment_slug: "production", dataset_slug: "postgres--pganalyze-",

...

⏺ I can see the 17,093ms EXPLAIN Plan span. Let me resolve it to the actual explain plan in pganalyze.

⏺ pganalyze - get_query_explain_from_trace (MCP)(serverId: "qlosi5joffcpfppzmz6aufoiga", datname: "pgaweb", fingerprint: "1012895bd5a59b5b", timestamp: 1772037133)

...

⏺ Here's the explain plan for the 18-second LogStats query:

EXPLAIN Plan (17,093ms runtime)

The query scans 8 weekly-partitioned log_stats_7d tables (Feb 18-25) via an Append node, filtering by database_id and a time range on occurred_at_1min.

Root cause: I/O bound on cold data

The total time is almost entirely I/O wait: 16,676ms out of 17,093ms (97.6%) is spent on disk reads.

┌───────────────────────┬───────┬───────────────┬────────────────────┬───────────────────┐

│ Partition │ Rows │ I/O Read Time │ Shared Blocks Read │ Shared Blocks Hit │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260218 │ 2,628 │ 3,198ms │ 6,946 │ 0 │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260219 │ 7,307 │ 2,846ms │ 6,808 │ 0 │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260220 │ 7,305 │ 2,867ms │ 6,832 │ 0 │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260221 │ 6,882 │ 2,678ms │ 6,378 │ 0 │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260222 │ 7,118 │ 2,544ms │ 6,541 │ 0 │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260223 │ 6,865 │ 2,538ms │ 6,426 │ 34 hit │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260224 │ 8,256 │ 4ms │ 9 │ 7,475 hit │

├───────────────────────┼───────┼───────────────┼────────────────────┼───────────────────┤

│ log_stats_7d_20260225 │ 5,331 │ 1ms │ 2 │ 4,852 hit │

└───────────────────────┴───────┴───────────────┴────────────────────┴───────────────────┘

The pattern is clear: recent partitions (Feb 24-25) are hot in the buffer cache (sub-millisecond I/O), while older partitions (Feb 18-23) are cold and

require ~2.5-3.2 seconds each of disk reads. Since all 8 partitions are scanned sequentially, the cold reads compound to ~17 seconds total.

Each partition uses an index scan on database_id_idx but then reads a lot of blocks (6,000-7,000 per partition) because the data for a given database_id is spread across many heap pages.Controlling access and PII filtering

For interactive use cases, on first use, the client opens an OAuth flow against your pganalyze account. You can grant full read access, which returns everything including query text and EXPLAIN plans, or basic read access, which redacts sensitive content like query parameters and, by default, does not return EXPLAIN plans. The reason is that EXPLAIN plans typically embed real parameter values — so under basic read, they are only returned when the pganalyze collector is configured to normalize query samples.

That configuration is part of pganalyze's PII filtering, which happens before any data leaves your infrastructure. The collector runs in your environment and applies configurable redaction to query text, parameter values, and log lines before anything reaches pganalyze itself. For environments with strict data privacy requirements, we recommend the following configuration:

filter_log_secret: all

filter_query_sample: normalize

filter_query_text: unparsableWith this, the MCP server, and any LLM querying it, never sees raw query parameters or unsanitized log content from your production database, but you can still get normalized EXPLAIN Plan data, which is critical for many slow query investigations.

Whichever access level you pick, the MCP server respects the role-based permissions configured in your pganalyze organization, so an engineer who can only see staging will see only staging through the MCP server too. For headless agents and CI pipelines where OAuth is impractical, API key authentication is also supported.

This kind of integration is not new for our customers. Over the years we've been positively surprised by different teams building internal Slack bots, GitHub PR check agents, and platform tooling on top of pganalyze's GraphQL API. The MCP Server opens up that same data plane to agentic AI workflows: organizations can now stand up investigation agents and centralized MCP gateways powered by pganalyze, using off-the-shelf MCP clients.

How Notion used the pganalyze MCP Server to solve a multi-week production mystery

One of our early access partners, Notion, hit a database problem that demonstrates this approach far better than we could in the abstract. Arka Ganguli and the database engineering team at Notion ran into periodic slowdowns on a read replica that did not correlate with anything visible at the application layer.

In Arka's words:

We used the pganalyze MCP Server to solve a production mystery we'd been chasing for weeks: periodic slowdowns on one of our replicas that we couldn't correlate to anything on the application side. Using the pganalyze MCP Server and Claude Code, we were able to correlate the slowdowns to autovacuums running on a very large table on the primary. Near the end of each vacuum run in the vacuuming heap phase, there's a burst of heavy I/O that overwhelms the replicas. Overall, this was a great experience and I'm super excited to use it more in future investigations.

— Arka Ganguli, Engineering Manager, Notion

The shape of that incident is worth lingering on, because it is a textbook case of why historical, cross-source data matters. When autovacuum reclaims space from dead tuples, it dirties every heap page that contains them. On a large table with enough churn, that means a sudden surge of dirtied pages, each one shipped through WAL to every replica. The resulting replay can saturate a replica's I/O bandwidth, and it competes with read queries that have nothing to do with the table being vacuumed. Surfacing that correlation requires cross-source analysis with historical depth: exactly the kind of work the pganalyze MCP Server enables.

What's next and getting started

Public preview means the MCP Server is available today to pganalyze cloud customers on our current plans, with no wait list. Self-serve customers on legacy plans will need to upgrade to a current plan. Enterprise Server customers can expect support in the next major release. We currently enforce a rate limits of 100 tool calls per hour for each billable server, which is intended to support interactive use cases, while we learn more about real-world usage patterns for agent-driven flows.

Today the pganalyze MCP Server is best for triaging incidents, but we are not stopping there. We're working to optionally enable AI tools to use pganalyze Workbooks to test query variants and run EXPLAIN ANALYZE through the collector workflow, enabling comprehensive testing of different query rewrites, whilst recording information in pganalyze for easy reference.

Want a deeper walkthrough of how we think about the MCP server and AI tooling? Watch the recorded webinar from February.

Try it now

- Sign up or log in to pganalyze to connect from Claude Code, Codex, Cursor, or any other MCP-compatible client.

- Read the docs for setup instructions, authentication options, and the complete tool reference.

Get a demo or talk to us

- Schedule a demo for a customized 1:1 walkthrough with our team.

- Let us know what is working — we would love to hear how you are using the MCP Server in your own investigations, and what we should improve next.

With thanks to the Notion and Robinhood teams for sharing their stories publicly, and to every team who took the time to test this with us early.